The CoreSAEP project (Computational Reasoning for Socially Adaptive Electronic Partners, 2014-2022) is about software that understands norms and values of people.

At least, this was the lay person’s summary I came up with at the start of the project. While it made for a nice tagline, I later started using the somewhat humbler phrasing of software that takes into account norms and values of people. Whether digital technology can ever be considered to truly understand people is, I believe, an open question that we are nowhere near answering. A further refinement I have come to see as necessary is that our focus is on individuals and their behavior, leading to the following phrasing of what we study in the project: software that takes into account personal norms and values.

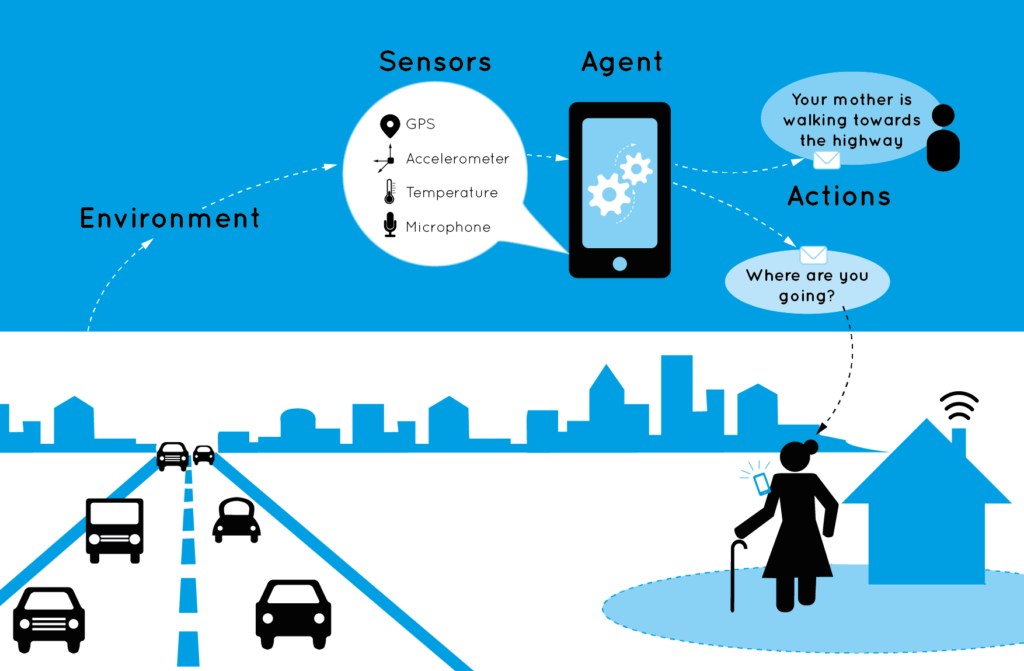

More on these and other insights we have obtained throughout the project can be found in the short overview video I made for the concluding symposium of the project: ‘Being human in the digital society: on technology, norms and us’ (slides). I also discuss some of these questions in a piece (in Dutch) for the platform Beste-ID.nl: In gesprek met machines / in conversation with machines. Furthermore, we have made an ‘infographic’ that presents the project’s results in an accessible way (CoreSAEP Infographic English / CoreSAEP Infographic Dutch, illustrations and design by Just Julie).

Human-machine alignment via person-centered models, methods and meaning making from University of Twente on Vimeo.

The PhD candidate of the CoreSAEP project, Dr. Ilir Kola, successfully defended his PhD thesis Enabling Social Situation Awareness in Support Agents (URL) on November 21st, 2022, at TU Delft.

NWO Vidi Personal Grant

The CoreSAEP project is a Vidi personal grant (800K), funded by the Dutch funding agency NWO. It was awarded to me in 2014; however, due to illness I wasn’t able to start until 2017. It is a 5-year project, which was concluded in 2022. More information about the CoreSAEP project and the team can be found on the CoreSAEP website. The project vision is further described in our AAMAS vision paper Creating Socially Adaptive Electronic Partners: Interaction, Reasoning and Ethical Challenges (2015).

Organisation-Aware Agents

The project has its origins in largely curiosity-driven research I initiated on autonomous software agents that are capable of reasoning about the organisation in which they function: organisation-aware agents (2009). I was interested in investigating whether my PhD research on programming (single) software agents could be connected with a large body of work in the multiagent systems area on the specification of agent organisations. These agent organisations are aimed at coordinating and regulating multiagent systems in order to make them function more effectively, inspired by the functioning of human organisations. However, there was very little work on how individual agents could somehow “understand” these organisational specifications and take them into account in their decision making.

I realised that a crucial step in ensuring that agents properly interpret these organisational specifications is to specify precisely what these specifications mean in terms of expected agent behaviour. That is, we needed a formal specification and semantics. This led to a collaboration with researchers working on the MOISE agent organization framework, which resulted in the paper Formalizing Organizational Constraints: A Semantic Approach (2010). However, while further studying organisational agent frameworks, I realised that things become complex quickly due to their large number of concepts, such as groups, roles and their relations, goals and missions, etc. Therefore, to make progress without getting lost in this complexity, I decided to focus for the time being solely on the concept of norms as descriptions of social expectations on agent behaviour.

Socially Adaptive Electronic Partners (SAEPs)

What finally laid the foundations for the awarded Vidi project were three further important insights:

- Norms & Temporal logic: Expectations on agent behaviour can naturally be formulated in temporal logic, as we did in the above-mentioned 2010 paper on MOISE. These temporal specifications can then be interpreted as norms describing desired agent behaviour. Agents that adapt to norms may then be specified using techniques from executable temporal logic, which is used to generate behaviour from a temporal specification. In collaboration with dr. Louise Dennis and prof. Michael Fisher from Liverpool and dr. Koen Hindriks (Delft) we worked out this idea in two publications on norm compliance (2013, 2015).

- Norms & Supportive technology: Agents’ ability to adapt to norms is especially important when these agents support people in their daily lives. Without such adaptation, software is rigid, which leads to violations of people’s important norms. This insight was inspired by research in the Interactive Intelligence section led by prof. Catholijn Jonker at TU Delft on Human-Agent Robot Teamwork (HART).

- Norms & Human values: The underlying reason why it is important for supportive technology to attempt to adhere to people’s norms is that these norms reflect people’s values – i.e., what people find important in life. We obtained this insight through the research of my PhD student Alex Kayal who worked on the COMMIT/ project on normative social applications (PhD thesis (2017)), in particular focusing on location sharing between parents and children. The importance of values emerged from a user study (2013) we conducted to better understand this application context.

These ingredients, i.e., the technical approach, the type of application, and the “why” behind all of this, are what eventually led to the writing of the awarded project proposal.
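As a rough illustration of the first insight above (norms as temporal specifications), the following Python sketch checks a finite trace of states against a norm of the form “whenever the trigger holds, the response holds at that moment or later”. The proposition names, the function names and the example trace are hypothetical and chosen only for illustration. This is a simple after-the-fact compliance check under those assumptions, not the executable temporal logic approach of the publications mentioned above, which generates behaviour from the specification.

```python
from typing import List, Set

# One state is the set of atomic propositions that hold at one time step.
State = Set[str]


def violations(trace: List[State], trigger: str, response: str) -> List[int]:
    """Return the indices of states where `trigger` holds but `response`
    never holds at that state or at any later state of the finite trace."""
    pending: List[int] = []
    for i, state in enumerate(trace):
        if trigger in state:
            pending.append(i)
        if response in state:
            # Every trigger seen so far (including this step) is answered.
            pending.clear()
    return pending


def complies(trace: List[State], trigger: str, response: str) -> bool:
    """True if the trace satisfies 'always (trigger -> eventually response)'
    under a finite-trace reading."""
    return not violations(trace, trigger, response)


# Hypothetical location-sharing example: a parent should eventually be
# notified whenever the child leaves school.
trace: List[State] = [
    {"child_leaves_school"},
    set(),
    {"parent_notified"},
    {"child_leaves_school"},  # no notification follows in this trace
]

print(violations(trace, "child_leaves_school", "parent_notified"))
print(complies(trace[:3], "child_leaves_school", "parent_notified"))
```

An agent that adapts to such a norm could, for instance, treat the pending indices as open obligations and choose actions that discharge them. How that choice is made is outside the scope of this sketch.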