This project focuses on the development of trust during Human-Agent Interaction from a human-centered, cognitive psychological perspective. Artificial intelligence (AI) software agents and embodied agents such as robots are becoming more prevalent and increasingly autonomous. Handing over at least some of our control during Human-Agent Interaction will change our relation to such technology. A central question is then: under what conditions are we willing to trust such systems, and what happens to that trust when our artificial “teammates” make mistakes? Current AI cannot easily adapt to new situations and lacks general world knowledge, common sense, and social skills. Yet (semi-)autonomous agents are increasingly deployed in operational situations characterized by complexity, risk and uncertainty, such as military missions and city traffic, so flawless performance cannot be guaranteed. Agent failure or other unexpected behaviour can negatively affect a human’s trust in the agent. Because trust is a fundamental aspect of collaboration, strategies to repair trust are considered crucial for long-term interactions. This project therefore investigates what agents can do or say to restore broken trust.

BMS Signature PhD

The project is a BMS Signature PhD position at the University of Twente (UT), awarded to Esther Kox (Esther Kox LinkedIn, Esther Kox UT profile) in 2020 via a call for research proposals from the faculty of Behavioural, Management and Social Sciences (BMS). The BMS Signature PhD initiative aims to speed up the transition to a truly integrated social sciences and technology faculty. The evaluation committee looked for projects that had a demonstrable link with technology and the BMSLab, and it encouraged novel multidisciplinary collaborations. The proposal with Esther Kox as the PhD candidate and prof. dr. José Kerstholt (UT, TNO), dr. ir. Peter de Vries (UT) and dr. M. Birna van Riemsdijk (UT) as the supervisory team was selected for funding. In October 2020, Esther started as a PhD candidate at the UT in the department of Psychology of Conflict, Risk and Safety (PCRS).

Esther Kox’s PhD trajectory began in 2019 as part of a research program that TNO (the Netherlands Organisation for Applied Scientific Research) carried out for the Ministry of Defense, called BIHUNT (Behavioral Impact of Human Nonhuman intelligent collaborator Teaming). The aim of this program was to investigate the consequences for the military organization of future concepts of operations in which humans collaborate with artificially intelligent systems, such as robots or software agents, that are to some extent comparable to human team members.

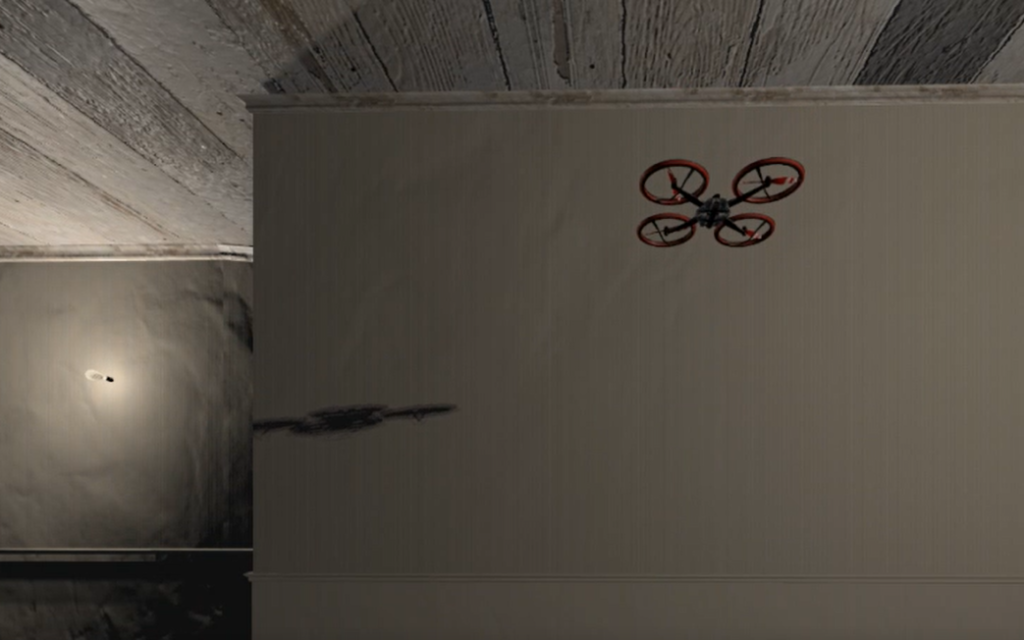

Virtual Reality Environment

Together with colleagues from TNO (Tessa Klunder and Jonathan Barnhoorn) and from the BMS lab, the lab of the social sciences faculty at the UT (Lucia Rabago Mayer), we have created a Virtual Reality (VR) environment that allows us to study how people behave during a Human-Agent teaming task and, more specifically, how they respond to trust violations by an artificial agent.

With calibrated trust as a guiding principle, we explore which psychosocial requirements must be met to maintain trust, especially when an agent fails.